Fallom

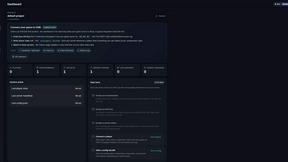

Fallom delivers real-time observability and compliance tooling for your AI agents and LLM operations.

About Fallom

Fallom is an enterprise observability platform built for AI workloads. It gives engineering, data science, and compliance teams visibility into large language model (LLM) and autonomous agent workloads running in production, which are otherwise hard to inspect. Its core capability is end-to-end tracing of every LLM interaction: prompts, outputs, tool calls, token usage, latency, and per-call cost attribution. Fallom also adds business context by grouping traces by session, user, or customer, which speeds up debugging and supports usage analytics. For compliance, it provides immutable audit trails, model versioning, and consent tracking to help teams meet regulations such as the EU AI Act and GDPR. Integration goes through an OpenTelemetry-native SDK, giving teams live monitoring and cost controls as they scale AI applications.

Features of Fallom

End-to-End LLM Tracing

Fallom provides real-time visibility into every LLM call within your agentic workflows. It captures the exact prompts submitted, the generated outputs, all intermediate tool or function calls with their arguments and results, token consumption, latency metrics, and the calculated cost for each interaction. This granular trace data is essential for debugging complex chains, understanding performance bottlenecks, and verifying that AI systems behave as expected in production.
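To make the captured fields concrete, here is a minimal sketch of what recording one traced LLM call might look like. It is not Fallom's SDK: `TraceRecord`, `traced_llm_call`, and `fake_llm` are hypothetical names, and the whitespace word count is only a crude stand-in for real token counts reported by a provider.

```python
import time
from dataclasses import dataclass, field


@dataclass
class TraceRecord:
    """One traced LLM call (hypothetical shape, not Fallom's schema)."""
    name: str
    prompt: str
    output: str = ""
    latency_ms: float = 0.0
    input_tokens: int = 0
    output_tokens: int = 0
    tool_calls: list = field(default_factory=list)


TRACES = []  # in-memory sink; a real exporter would ship records elsewhere


def traced_llm_call(name, llm_fn, prompt):
    """Call llm_fn(prompt) and record prompt, output, latency, and token counts."""
    start = time.perf_counter()
    output = llm_fn(prompt)
    latency_ms = (time.perf_counter() - start) * 1000
    TRACES.append(
        TraceRecord(
            name=name,
            prompt=prompt,
            output=output,
            latency_ms=latency_ms,
            # Assumption: word count approximates tokens for illustration only.
            input_tokens=len(prompt.split()),
            output_tokens=len(output.split()),
        )
    )
    return output


def fake_llm(prompt):
    """Stand-in for a real model call."""
    return "Paris is the capital of France."


answer = traced_llm_call("qa.answer", fake_llm, "What is the capital of France?")
```

After the call, `TRACES[0]` holds the prompt, the output, a measured latency, and the approximate token counts for that interaction.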

Enterprise Cost Attribution & Governance

Track and control your AI spend. Fallom attributes costs by model, individual user, team, or specific customer, giving finance and engineering the transparency needed for budgeting, forecasting, and internal chargeback. Real-time dashboards display spend analytics, so leaders can monitor budgets, spot unexpected usage patterns, and choose models that balance performance against cost at scale.
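The arithmetic behind cost attribution can be sketched as follows. This is an illustration under stated assumptions, not Fallom's implementation: the model names and per-1K-token prices are invented, and `call_cost` and `attribute_costs` are hypothetical helpers.

```python
from collections import defaultdict

# Hypothetical prices in USD per 1,000 tokens; real prices vary by provider.
PRICES_PER_1K = {
    "model-large": {"input": 0.01, "output": 0.03},
    "model-small": {"input": 0.001, "output": 0.002},
}


def call_cost(model, input_tokens, output_tokens):
    """Cost of one call: tokens / 1000 * price, summed over input and output."""
    price = PRICES_PER_1K[model]
    return (input_tokens / 1000) * price["input"] + (output_tokens / 1000) * price["output"]


def attribute_costs(calls, dimension):
    """Sum call costs grouped by one dimension, e.g. 'team' or 'customer'."""
    totals = defaultdict(float)
    for call in calls:
        totals[call[dimension]] += call_cost(
            call["model"], call["input_tokens"], call["output_tokens"]
        )
    return dict(totals)


calls = [
    {"model": "model-large", "team": "search", "customer": "acme",
     "input_tokens": 2000, "output_tokens": 1000},
    {"model": "model-small", "team": "search", "customer": "globex",
     "input_tokens": 4000, "output_tokens": 1000},
    {"model": "model-large", "team": "support", "customer": "acme",
     "input_tokens": 1000, "output_tokens": 0},
]

by_team = attribute_costs(calls, "team")
by_customer = attribute_costs(calls, "customer")
```

With these invented prices, the `search` team totals about $0.056 and customer `acme` about $0.06. The same records can be regrouped along any dimension that was attached at trace time.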

Compliance-Ready Audit Trails

Engineered for regulated industries, Fallom automatically generates immutable, complete audit trails for all AI interactions. This includes logging inputs and outputs, tracking the model version used in each query, and recording user consent where required. These features are built to help organizations demonstrate compliance with regulations and audit frameworks such as the EU AI Act, GDPR, and SOC 2, turning a complex regulatory challenge into a managed operational process.

Advanced Session & Performance Analytics

Move beyond isolated traces to understand the full user journey. Fallom groups related traces into sessions, providing context-rich views of complete user interactions. With timing waterfall visualizations, teams can dissect multi-step agent workflows to pinpoint where latency occurs, whether in an LLM call, a tool execution, or internal logic, which speeds up performance optimization and root cause analysis.
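Session grouping and a timing waterfall can be sketched in a few lines. The span fields (`session_id`, `name`, `start_ms`, `end_ms`) and the helper names below are assumptions made for illustration and do not reflect Fallom's data model.

```python
from collections import defaultdict


def group_by_session(spans):
    """Group spans by session_id and sort each session by start time."""
    sessions = defaultdict(list)
    for span in spans:
        sessions[span["session_id"]].append(span)
    for session_spans in sessions.values():
        session_spans.sort(key=lambda s: s["start_ms"])
    return dict(sessions)


def slowest_step(session_spans):
    """Return the span with the longest duration in a session."""
    return max(session_spans, key=lambda s: s["end_ms"] - s["start_ms"])


def render_waterfall(session_spans, ms_per_char=100):
    """Render a text waterfall: one row per span, offset by its start time."""
    origin = min(s["start_ms"] for s in session_spans)
    rows = []
    for s in session_spans:
        offset = (s["start_ms"] - origin) // ms_per_char
        width = max(1, (s["end_ms"] - s["start_ms"]) // ms_per_char)
        rows.append(f"{s['name']:<12}|{' ' * offset}{'#' * width}")
    return "\n".join(rows)


spans = [
    {"session_id": "s1", "name": "llm.plan", "start_ms": 0, "end_ms": 400},
    {"session_id": "s1", "name": "tool.search", "start_ms": 400, "end_ms": 1300},
    {"session_id": "s2", "name": "llm.answer", "start_ms": 50, "end_ms": 250},
    {"session_id": "s1", "name": "llm.answer", "start_ms": 1300, "end_ms": 1600},
]

sessions = group_by_session(spans)
bottleneck = slowest_step(sessions["s1"])
waterfall = render_waterfall(sessions["s1"])
```

In this sample, the longest step in session `s1` is the tool execution rather than either LLM call, which is the kind of finding a waterfall view is meant to surface.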

Use Cases of Fallom

Proactive AI Operations & Incident Response

Engineering and SRE teams use Fallom for live monitoring and alerting on their AI-powered applications. By observing real-time traces, latency metrics, and error rates, they can detect anomalies, performance degradation, or hallucination spikes before they impact end-users. When issues occur, the detailed trace context enables rapid triage and resolution, minimizing downtime and ensuring service reliability for critical AI features.

Financial Optimization & Showback

Finance and engineering leadership leverage Fallom's precise cost attribution to manage and forecast AI spend. By analyzing costs per model, team, or product feature, organizations can eliminate waste, make data-driven decisions on model selection (e.g., GPT-4 vs. GPT-4o-mini), and implement accurate showback or chargeback mechanisms to align resource usage with business unit budgets.

Regulatory Compliance & Risk Auditing

Compliance, legal, and security teams rely on Fallom to fulfill stringent regulatory requirements for AI systems. The platform's automated audit trails, consent tracking, and model versioning provide the evidence needed for audits. Privacy modes allow sensitive content to be redacted while metadata such as token counts and latency is still captured, enabling organizations to deploy AI confidently in healthcare, finance, and other regulated sectors.

AI Product Development & Evaluation

AI product managers and developers utilize Fallom for iterative improvement and safe deployment. Features like the Prompt Store enable version control and A/B testing of prompt variations. Integrated evaluations track key metrics like accuracy and hallucination rates across model versions, allowing teams to validate improvements, catch regressions, and roll out new models with confidence using controlled traffic splitting.
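One common way to implement controlled traffic splitting is deterministic hash bucketing, sketched below. This is a generic technique, not a description of how Fallom routes traffic; `assign_variant`, the experiment name, and the 90/10 split are hypothetical.

```python
import hashlib


def assign_variant(user_id, experiment, variants):
    """Deterministically map a user to a variant.

    variants is a list of (name, weight) pairs whose integer weights sum to 100.
    The same user and experiment always land in the same bucket.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    cumulative = 0
    for name, weight in variants:
        cumulative += weight
        if bucket < cumulative:
            return name
    raise ValueError("variant weights must sum to 100")


# Hypothetical rollout: 90% of users keep the current prompt, 10% get the candidate.
SPLIT = [("control", 90), ("candidate", 10)]

variant = assign_variant("user-1", "prompt-v2", SPLIT)
```

Because assignment depends only on the experiment name and user ID, a user sees the same variant on every request, which keeps evaluation metrics comparable across sessions.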

Frequently Asked Questions

How does Fallom integrate with my existing AI applications?

Fallom offers an OpenTelemetry-native SDK designed for seamless integration. You can instrument your LLM and agent code in under five minutes. The SDK is provider-agnostic, working with any model from OpenAI, Anthropic, Google, or open-source providers, ensuring zero lock-in and compatibility with your existing tech stack and workflows.

How does Fallom handle sensitive or private user data?

Fallom is built with enterprise-grade privacy controls. It offers a configurable Privacy Mode that lets you disable full content capture for sensitive interactions. You can choose to log only metadata (like token counts and latency) or apply content redaction rules, so you keep the operational observability needed for debugging while protecting confidential user information and complying with data protection policies.
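The difference between metadata-only logging and content redaction can be sketched as follows. The mode names (`full`, `metadata_only`, `redact`), the field names, and the single email rule are assumptions for illustration and are not Fallom's configuration options.

```python
import re

# Fields that carry user content, as opposed to operational metadata.
CONTENT_FIELDS = ("prompt", "output")

# Simplified email pattern used as one example redaction rule.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def apply_privacy(record, mode):
    """Return a copy of a trace record filtered according to a privacy mode."""
    if mode == "full":
        return dict(record)
    if mode == "metadata_only":
        # Drop content fields entirely; keep token counts, latency, and so on.
        return {k: v for k, v in record.items() if k not in CONTENT_FIELDS}
    if mode == "redact":
        redacted = dict(record)
        for field_name in CONTENT_FIELDS:
            if field_name in redacted:
                redacted[field_name] = EMAIL_RE.sub("[REDACTED_EMAIL]", redacted[field_name])
        return redacted
    raise ValueError(f"unknown privacy mode: {mode}")


record = {
    "prompt": "Email jane.doe@example.com about the refund",
    "output": "Drafted a message to the customer.",
    "input_tokens": 7,
    "latency_ms": 420,
}

metadata_only = apply_privacy(record, "metadata_only")
redacted = apply_privacy(record, "redact")
```

Here `metadata_only` retains just `input_tokens` and `latency_ms`, while `redacted` keeps the prompt text with the address replaced by `[REDACTED_EMAIL]`. Real redaction rules would need to cover more than email addresses.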

Can Fallom help us reduce our overall LLM costs?

Yes. Fallom provides the granular visibility needed for cost optimization. By tracking spend per model, per feature, and per user, you can identify inefficiencies such as using expensive models for simple tasks. Insights into token usage and performance let you right-size model selection, implement usage policies, and monitor the financial impact of changes in real time.

Is Fallom suitable for monitoring complex, multi-step AI agents?

Yes, this is a core strength of Fallom. It automatically traces the entire execution chain of an agent and visualizes each step (LLM calls, tool executions, and conditional logic) in a unified timeline or waterfall view. This end-to-end context is critical for debugging failures in long-running workflows, understanding the provenance of an output, and optimizing the overall latency and reliability of autonomous agent systems.

Similar to Fallom

Subiq

Subiq eliminates wasted SaaS spend by giving small teams one place to track, manage, and optimize every subscription.

Game Server Backend

Game Server Backend eliminates backend complexity for multiplayer games with a single API unifying player auth, data, leaderboards, and server.

Flyback.ai

Flyback is the elite buy-side intelligence platform that uses AI to score luxury watch deals against verified sales data.

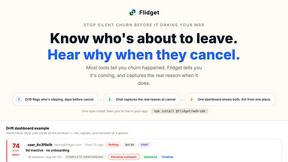

Flidget

Flidget predicts churn with drift scores and captures real exit reasons through an AI chat, all in one dashboard.

Headless Domains

Headless Domains provides portable, verifiable, machine-readable web identities so AI agents can prove their authority and trustworthiness across any.