Agenta vs Fallom

Side-by-side comparison to help you choose the right tool.

Agenta is an open-source platform for LLM app development that centralizes prompts.

Last updated: March 1, 2026

Fallom delivers real-time observability and compliance tooling for your AI agents and LLM operations.

Last updated: February 28, 2026

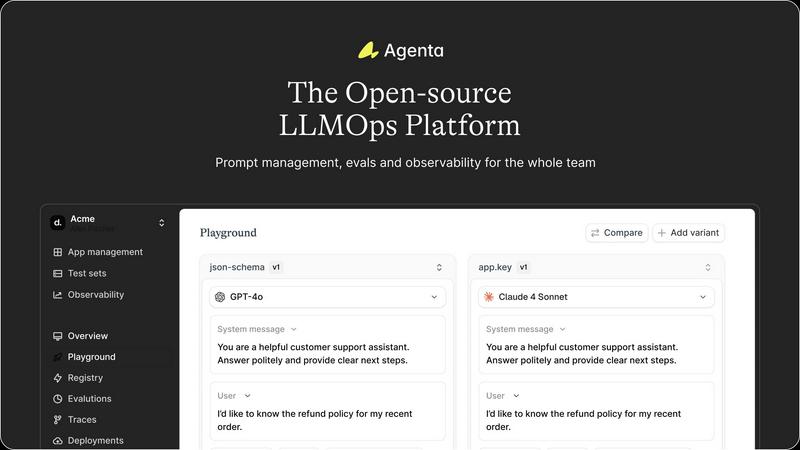

Feature Comparison

Agenta

Centralized Prompt Management

Agenta consolidates all prompts, evaluations, and traces within a single platform, eliminating the chaos of scattered resources. This centralization ensures that every team member has access to consistent and updated information, fostering collaboration and reducing the risk of errors.

Unified Playground for Experimentation

The unified playground allows teams to compare prompts and models side-by-side, facilitating a collaborative approach to experimentation. Users can save errors found in production to a test set and utilize them for further analysis, ensuring continuous improvement in model performance.

Automated Evaluation System

Agenta replaces guesswork with systematic evaluations by automating the process of running experiments, tracking results, and validating every change. This feature integrates seamlessly with any evaluator, including LLM-as-a-judge, enabling rigorous performance assessments.
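The evaluation loop described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Agenta's SDK: `EvalCase`, `run_evaluation`, `exact_match`, and `toy_app` are hypothetical names. An LLM-as-a-judge evaluator would simply be another callable with the same `(output, expected) -> score` shape.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical types for illustration; not part of Agenta's API.
@dataclass
class EvalCase:
    prompt: str
    expected: str

# An evaluator scores one output against the expected answer (0.0 to 1.0).
Evaluator = Callable[[str, str], float]

def exact_match(output: str, expected: str) -> float:
    """Case-insensitive exact-match evaluator."""
    return 1.0 if output.strip().lower() == expected.strip().lower() else 0.0

def run_evaluation(app: Callable[[str], str],
                   test_set: List[EvalCase],
                   evaluator: Evaluator) -> float:
    """Run the app on every test case and return the mean score."""
    scores = [evaluator(app(case.prompt), case.expected) for case in test_set]
    return sum(scores) / len(scores) if scores else 0.0

def toy_app(prompt: str) -> str:
    """Stand-in for an LLM application; one answer is deliberately wrong."""
    answers = {"Capital of France?": "Paris", "2 + 2?": "5"}
    return answers.get(prompt, "")

test_set = [EvalCase("Capital of France?", "paris"), EvalCase("2 + 2?", "4")]
score = run_evaluation(toy_app, test_set, exact_match)
```

Keeping the evaluator as a plain callable means a rule-based check, a human rating, or a model-based judge can be swapped in without changing the loop.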

Comprehensive Observability Tools

With advanced observability features, Agenta enables teams to trace every request and pinpoint failure points effectively. Users can annotate traces collaboratively and turn any trace into a test with a single click, closing the feedback loop and enhancing debugging efficiency.

Fallom

End-to-End LLM Tracing

Fallom provides complete, real-time visibility into every LLM call within your agentic workflows. It captures a comprehensive telemetry dataset including the exact prompts submitted, the generated outputs, all intermediate tool or function calls with their arguments and results, token consumption, latency metrics, and the calculated cost for each interaction. This granular trace data is essential for debugging complex chains, understanding performance bottlenecks, and verifying that AI systems behave as expected in production environments.
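To make the telemetry fields listed above concrete, here is a sketch of what a single trace record might look like. The class names, field names, and the price table are assumptions made for illustration; they are not Fallom's schema, and real per-token prices vary by provider and model.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical prices in USD per 1,000 tokens; not real provider pricing.
PRICES_PER_1K = {"example-model": {"input": 0.5, "output": 1.5}}

@dataclass
class ToolCall:
    name: str
    arguments: dict
    result: str

@dataclass
class LLMTrace:
    model: str
    prompt: str
    output: str
    input_tokens: int
    output_tokens: int
    latency_ms: float
    tool_calls: List[ToolCall] = field(default_factory=list)

    def cost_usd(self) -> float:
        """Compute cost from token counts and the per-1K price table."""
        price = PRICES_PER_1K[self.model]
        total = (self.input_tokens * price["input"]
                 + self.output_tokens * price["output"])
        return total / 1000

trace = LLMTrace(
    model="example-model",
    prompt="What is the weather in Oslo?",
    output="It is 4 degrees and cloudy.",
    input_tokens=2000,
    output_tokens=1000,
    latency_ms=850.0,
    tool_calls=[ToolCall("get_weather", {"city": "Oslo"}, "4C, cloudy")],
)
cost = trace.cost_usd()
```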

Enterprise Cost Attribution & Governance

Fallom gives you detailed financial control over your AI expenditures. It attributes costs across several dimensions (by model, individual user, team, or specific customer), providing transparency for budgeting, forecasting, and internal chargeback. The platform's real-time dashboards display spend analytics, allowing leaders to monitor budgets, identify unexpected usage patterns, and optimize model selection to balance performance with cost-efficiency at scale.
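Attribution by dimension amounts to summing per-call costs under a chosen key. The sketch below assumes each call has already been logged as a dictionary with a `cost_usd` field; the record layout and the `attribute_costs` helper are hypothetical and only illustrate the idea.

```python
from collections import defaultdict
from typing import Dict, List

def attribute_costs(records: List[dict], dimension: str) -> Dict[str, float]:
    """Sum cost_usd over all records, grouped by the given dimension."""
    totals: Dict[str, float] = defaultdict(float)
    for record in records:
        totals[record[dimension]] += record["cost_usd"]
    return dict(totals)

# Illustrative records; the field names are assumptions.
records = [
    {"model": "model-a", "team": "search", "customer": "acme", "cost_usd": 0.25},
    {"model": "model-b", "team": "search", "customer": "globex", "cost_usd": 0.5},
    {"model": "model-a", "team": "support", "customer": "acme", "cost_usd": 1.0},
]

by_team = attribute_costs(records, "team")
by_model = attribute_costs(records, "model")
by_customer = attribute_costs(records, "customer")
```

The same records answer different questions depending on the key, which is what makes showback or chargeback per team and per customer possible from one log.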

Compliance-Ready Audit Trails

Engineered for regulated industries, Fallom automatically generates immutable, complete audit trails for all AI interactions. This includes logging of inputs and outputs, tracking of model versions used in each query, and recording user consent where required. These features are purpose-built to help organizations demonstrate compliance with regulations and frameworks such as the EU AI Act, SOC 2, and GDPR, turning a complex regulatory challenge into a managed operational process.

Advanced Session & Performance Analytics

Move beyond isolated traces to understand the full user journey. Fallom intelligently groups related traces into sessions, providing context-rich views of complete user interactions. Coupled with timing waterfall visualizations, teams can dissect multi-step agent workflows to pinpoint exactly where latency occurs—whether in an LLM call, a tool execution, or internal logic—dramatically accelerating performance optimization and root cause analysis.
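Session grouping and a timing waterfall can be illustrated with plain dictionaries. This is a sketch under assumed field names (`session_id`, `name`, `start_ms`, `end_ms`); it does not reflect how Fallom stores or renders spans.

```python
from collections import defaultdict
from typing import Dict, List

def group_by_session(spans: List[dict]) -> Dict[str, List[dict]]:
    """Group spans by session_id and order each group by start time."""
    sessions: Dict[str, List[dict]] = defaultdict(list)
    for span in spans:
        sessions[span["session_id"]].append(span)
    for items in sessions.values():
        items.sort(key=lambda s: s["start_ms"])
    return dict(sessions)

def slowest_step(spans: List[dict]) -> dict:
    """Return the span with the longest duration."""
    return max(spans, key=lambda s: s["end_ms"] - s["start_ms"])

def waterfall(spans: List[dict], scale_ms: int = 100) -> List[str]:
    """Render a text waterfall: one '#' per scale_ms of duration."""
    origin = min(s["start_ms"] for s in spans)
    lines = []
    for s in sorted(spans, key=lambda s: s["start_ms"]):
        offset = (s["start_ms"] - origin) // scale_ms
        width = max(1, (s["end_ms"] - s["start_ms"]) // scale_ms)
        lines.append(f"{s['name']:<12}{' ' * offset}{'#' * width}")
    return lines

# Illustrative spans, deliberately out of order.
spans = [
    {"session_id": "s1", "name": "tool_call", "start_ms": 800, "end_ms": 1000},
    {"session_id": "s2", "name": "llm_call", "start_ms": 0, "end_ms": 300},
    {"session_id": "s1", "name": "llm_call", "start_ms": 0, "end_ms": 800},
]

sessions = group_by_session(spans)
bottleneck = slowest_step(sessions["s1"])
chart = waterfall(sessions["s1"])
```

Printing `chart` line by line shows where each step starts and how long it runs, which is the information a waterfall view conveys.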

Use Cases

Agenta

Collaborative Development for LLM Applications

Agenta is ideal for AI teams looking to collaborate effectively on LLM applications. By centralizing tools and resources, teams can work together in real time, iterating on prompts and sharing insights to build more reliable applications.

Streamlined Debugging Processes

When issues arise in production, Agenta's observability tools allow teams to quickly identify and resolve failures. By tracing requests and analyzing failures systematically, teams can enhance the reliability of their LLM applications.

Evidence-Based Performance Evaluation

With Agenta, teams can conduct thorough evaluations of their models and prompts using automated and human evaluation methods. This evidence-based approach ensures that changes lead to measurable improvements in performance.

Integration with Existing Workflows

Agenta integrates seamlessly with popular frameworks and tools, such as LangChain and OpenAI, allowing organizations to leverage their current technology stack while enhancing their LLM development processes. This flexibility minimizes disruption and maximizes efficiency.

Fallom

Proactive AI Operations & Incident Response

Engineering and SRE teams use Fallom for live monitoring and alerting on their AI-powered applications. By observing real-time traces, latency metrics, and error rates, they can detect anomalies, performance degradation, or hallucination spikes before they impact end-users. When issues occur, the detailed trace context enables rapid triage and resolution, minimizing downtime and ensuring service reliability for critical AI features.

Financial Optimization & Showback

Finance and engineering leadership leverage Fallom's precise cost attribution to manage and forecast AI spend. By analyzing costs per model, team, or product feature, organizations can eliminate waste, make data-driven decisions on model selection (e.g., GPT-4 vs. GPT-4o-mini), and implement accurate showback or chargeback mechanisms to align resource usage with business unit budgets.

Regulatory Compliance & Risk Auditing

Compliance, legal, and security teams rely on Fallom to fulfill stringent regulatory requirements for AI systems. The platform's automated audit trails, consent tracking, and versioning provide the necessary evidence for audits. Privacy modes allow sensitive data to be redacted while maintaining full telemetry, enabling organizations to deploy AI confidently in healthcare, finance, and other regulated sectors.

AI Product Development & Evaluation

AI product managers and developers utilize Fallom for iterative improvement and safe deployment. Features like the Prompt Store enable version control and A/B testing of prompt variations. Integrated evaluations track key metrics like accuracy and hallucination rates across model versions, allowing teams to validate improvements, catch regressions, and roll out new models with confidence using controlled traffic splitting.
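Controlled traffic splitting between prompt or model variants is commonly done with deterministic hashing, so that a given user always sees the same variant. The sketch below shows that general technique; `assign_variant` and the variant names are hypothetical, and nothing here is taken from Fallom's implementation.

```python
import hashlib
from typing import List, Tuple

def assign_variant(user_id: str, variants: List[Tuple[str, int]]) -> str:
    """Map a user to a variant; weights are percentages summing to 100."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    cumulative = 0
    for name, weight in variants:
        cumulative += weight
        if bucket < cumulative:
            return name
    raise ValueError("variant weights must sum to 100")

# Send 90% of users to the current prompt and 10% to the candidate.
split = [("prompt-v1", 90), ("prompt-v2", 10)]
assignments = {uid: assign_variant(uid, split) for uid in ("u1", "u2", "u3")}
```

Because the bucket depends only on the user ID, raising the candidate's weight gradually moves more users over without reshuffling those already assigned to it.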

Overview

About Agenta

Agenta is an open-source LLMOps platform that helps AI teams build and deploy reliable large language model (LLM) applications. It is designed for developers, product managers, and domain experts who want a collaborative environment for their workflows. Because LLM behavior is unpredictable, teams often end up with scattered prompts, siloed workflows, and chaotic iterations. Agenta addresses this by centralizing the development process into a single source of truth, so teams can collaborate, maintain version control, and run evidence-based evaluations. Its integrated observability features turn debugging into systematic analysis, letting teams monitor performance and make informed decisions from experimentation through deployment.

About Fallom

Fallom is an enterprise observability platform built specifically for AI workloads. It provides visibility into large language model (LLM) and autonomous agent workloads running in production, which are otherwise hard to inspect. For engineering, data science, and compliance teams, Fallom makes AI operations transparent, manageable, and auditable. Its core value is end-to-end tracing of every LLM interaction, capturing prompts, outputs, tool calls, token usage, latency, and cost attribution. Beyond monitoring, Fallom adds business context by grouping traces by session, user, or customer, which supports faster debugging and analytics. Compliance is a design priority: the platform features immutable audit trails, model versioning, and consent tracking to help with regulations like the EU AI Act and GDPR. Its OpenTelemetry-native SDK is intended to make integration straightforward, giving teams live monitoring, cost controls, and the confidence to scale AI applications reliably.

Frequently Asked Questions

Agenta FAQ

What is LLMOps?

LLMOps refers to the operational practices and tools used to manage the lifecycle of large language models. It encompasses activities such as development, deployment, monitoring, and debugging to ensure reliable and efficient LLM applications.

Can Agenta integrate with other AI frameworks?

Yes, Agenta is designed for compatibility and can integrate with various frameworks and models, including LangChain and OpenAI. This ensures that teams can use their preferred tools while benefiting from Agenta's capabilities.

Is Agenta suitable for teams of all sizes?

Absolutely. Agenta is built to accommodate teams of any size, from small startups to large enterprises. Its collaborative features and centralized management make it ideal for diverse team structures and workflows.

How does Agenta support version control?

Agenta maintains a complete version history of prompts and evaluations, allowing teams to track changes over time. This feature ensures that team members can revert to previous versions if necessary, enhancing collaboration and reducing the risk of errors.

Fallom FAQ

How does Fallom integrate with my existing AI applications?

Fallom offers an OpenTelemetry-native SDK designed for seamless integration. You can instrument your LLM and agent code in under five minutes. The SDK is provider-agnostic, working with any model from OpenAI, Anthropic, Google, or open-source providers, ensuring zero lock-in and compatibility with your existing tech stack and workflows.

How does Fallom handle sensitive or private user data?

Fallom is built with enterprise-grade privacy controls. It offers a configurable Privacy Mode that allows you to disable full content capture for sensitive interactions. You can choose to log only metadata (like token counts and latency) or apply content redaction rules, ensuring you maintain full observability for debugging while protecting confidential user information and complying with data protection policies.
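The difference between full capture, metadata-only logging, and redaction can be shown with a small filter applied before a record is stored. The mode names, the `apply_privacy` helper, and the email pattern are assumptions for illustration; Fallom's actual Privacy Mode configuration is not described in this comparison.

```python
import re

# Simple email pattern for demonstration; real redaction rules would be broader.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
CONTENT_FIELDS = ("prompt", "output")

def apply_privacy(record: dict, mode: str) -> dict:
    """Return a copy of the record filtered according to the privacy mode."""
    if mode == "full":
        return dict(record)
    if mode == "metadata_only":
        # Drop content fields, keep token counts and latency.
        return {k: v for k, v in record.items() if k not in CONTENT_FIELDS}
    if mode == "redact":
        cleaned = dict(record)
        for key in CONTENT_FIELDS:
            cleaned[key] = EMAIL.sub("[REDACTED]", record[key])
        return cleaned
    raise ValueError(f"unknown privacy mode: {mode}")

record = {
    "prompt": "Email jane@example.com about the invoice",
    "output": "Draft sent to jane@example.com today",
    "total_tokens": 42,
    "latency_ms": 310,
}

metadata = apply_privacy(record, "metadata_only")
redacted = apply_privacy(record, "redact")
```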

Can Fallom help us reduce our overall LLM costs?

Absolutely. Fallom provides the granular visibility necessary for cost optimization. By tracking spend per model, per feature, and per user, you can identify inefficiencies—such as overusing expensive models for simple tasks. Insights into token usage and performance allow you to right-size model selection, implement usage policies, and monitor the financial impact of changes in real time, leading to significant cost savings.

Is Fallom suitable for monitoring complex, multi-step AI agents?

Yes, this is a core strength of Fallom. It automatically traces the entire execution chain of sophisticated agents, visualizing each step—LLM calls, tool executions, and conditional logic—in a unified timeline or waterfall view. This end-to-end context is critical for debugging failures in long-running workflows, understanding the provenance of an output, and optimizing the overall latency and reliability of autonomous agent systems.

Alternatives

Agenta Alternatives

Agenta is an open-source platform for large language model operations (LLMOps), aimed at AI teams that want to build and deploy reliable applications efficiently. By centralizing the development process, Agenta promotes collaboration and streamlines experimentation, which is crucial for teams navigating the complexities of AI technologies. Users often seek alternatives to Agenta because of pricing, specific feature sets, or platform needs that better fit their operational requirements. When choosing an alternative, consider ease of use, integration capabilities, support services, and how well the tool meets the demands of your team's AI development process.

Fallom Alternatives

Fallom is an AI-native observability platform built for enterprise-scale LLM and agent operations. It delivers real-time visibility into production AI, from granular call tracing to compliance assurance. Organizations may explore alternatives for various strategic reasons, such as aligning with specific budget frameworks, integrating into an existing technology stack, or requiring a different balance between depth of features and implementation simplicity. The landscape offers varied approaches to monitoring and governance. When evaluating an alternative, enterprises should prioritize solutions that offer genuine end-to-end traceability, robust cost attribution models, and strong compliance tooling. The platform must not only provide data but also deliver contextual, actionable intelligence that scales with your AI ambitions.