Agenta vs OpenMark AI

Side-by-side comparison to help you choose the right tool.

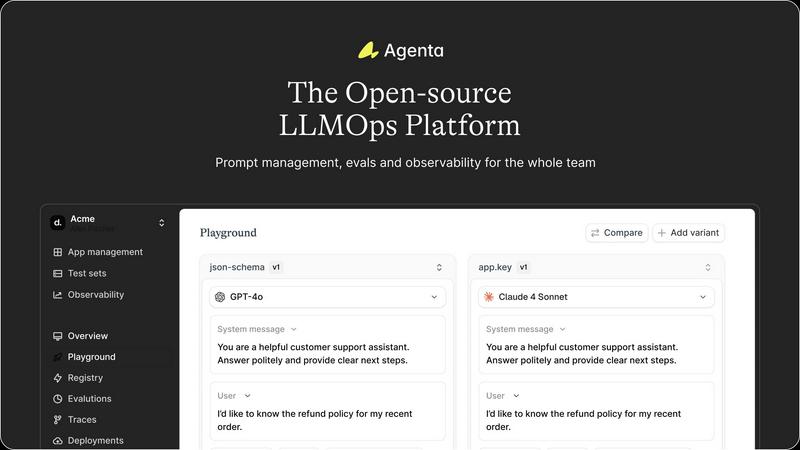

Agenta is an open-source LLMOps platform for LLM app development that centralizes prompts, evaluations, and traces in one place.

Last updated: March 1, 2026

OpenMark AI benchmarks over 100 LLMs on your specific tasks and shows which models perform best on cost, speed, and quality.

Last updated: March 26, 2026

Feature Comparison

Agenta

Centralized Prompt Management

Agenta consolidates prompts, evaluations, and traces in a single platform instead of leaving them scattered across tools. Every team member works from the same up-to-date information, which makes collaboration easier and reduces the risk of errors.

Unified Playground for Experimentation

The unified playground lets teams compare prompts and models side by side and experiment together. Errors found in production can be saved to a test set and reused in later experiments, so each iteration is checked against real failure cases.

Automated Evaluation System

Agenta replaces guesswork with systematic evaluation: it automates running experiments, tracking results, and validating each change. It works with any evaluator, including LLM-as-a-judge, so every change can be assessed rigorously.
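To illustrate the kind of evaluation loop described here, the Python sketch below runs an app over a test set and averages the scores returned by a pluggable evaluator. It is not Agenta's SDK: the names run_evaluation and exact_match are hypothetical, and in practice an LLM-as-a-judge evaluator would take the place of the exact-match scorer.

```python
from typing import Callable, Iterable

# Hypothetical types (not Agenta's API): an "app" maps an input string to an
# output string; an "evaluator" scores (input, output, expected) from 0 to 1.
App = Callable[[str], str]
Evaluator = Callable[[str, str, str], float]


def exact_match(inp: str, output: str, expected: str) -> float:
    """Simplest possible evaluator; inp is unused but kept so that richer
    evaluators (e.g. an LLM-as-a-judge) can share the same signature."""
    return 1.0 if output.strip() == expected.strip() else 0.0


def run_evaluation(app: App, test_set: Iterable[tuple[str, str]],
                   evaluator: Evaluator) -> float:
    """Run the app on every (input, expected) pair and return the mean score."""
    scores = [evaluator(inp, app(inp), expected) for inp, expected in test_set]
    if not scores:
        raise ValueError("test set is empty")
    return sum(scores) / len(scores)
```

Comparing two prompt or model variants then amounts to calling run_evaluation once per variant on the same test set and keeping the variant with the higher mean score.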

Comprehensive Observability Tools

With advanced observability features, Agenta enables teams to trace every request and pinpoint failure points effectively. Users can annotate traces collaboratively and turn any trace into a test with a single click, closing the feedback loop and enhancing debugging efficiency.

OpenMark AI

Intuitive Task Configuration

OpenMark AI offers an intuitive interface that allows users to describe their benchmarking tasks in plain language. This feature eliminates the need for complex coding or setup, enabling rapid deployment of tests across dozens of models.

Real-Time Model Comparisons

With OpenMark AI, users can benchmark over 100 AI models at once and view the results side by side. Results come from live API calls rather than cached data, so comparisons reflect each model's actual current performance.

Cost and Quality Analysis

OpenMark AI focuses on cost efficiency: it compares the quality of each model's outputs against the actual cost of the API calls that produced them. This helps teams find the models that fit their budget without sacrificing quality.
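The cost-versus-quality trade-off can be made concrete with a small calculation. The Python sketch below assumes you already have a mean quality score and an average cost per request for each model. ModelResult, quality_per_dollar, and rank_by_value are hypothetical names, not part of OpenMark AI, and quality per dollar is only one reasonable ranking metric among several.

```python
from dataclasses import dataclass


@dataclass
class ModelResult:
    """Hypothetical per-model benchmark summary (not OpenMark AI's data format)."""
    name: str
    quality: float           # mean scored quality, assumed to be on a 0-1 scale
    cost_per_request: float  # average cost of one API call, in USD


def quality_per_dollar(result: ModelResult) -> float:
    """Quality points per dollar spent; zero-cost models rank highest."""
    if result.cost_per_request <= 0:
        return float("inf")
    return result.quality / result.cost_per_request


def rank_by_value(results, budget_per_request, min_quality=0.0):
    """Keep models within budget and above a quality floor, best value first."""
    eligible = [
        r for r in results
        if r.cost_per_request <= budget_per_request and r.quality >= min_quality
    ]
    return sorted(eligible, key=quality_per_dollar, reverse=True)
```

The min_quality floor matters: ranking on quality per dollar alone tends to favor the cheapest model, so a minimum acceptable score keeps a real quality gap from being hidden by a low price.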

Consistency Monitoring

A standout feature of OpenMark AI is its ability to monitor output consistency across multiple runs of the same task. This lets users choose models that deliver stable, reliable performance, which is crucial for production applications.
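One simple way to quantify consistency is the spread of quality scores across repeated runs of the same task. The sketch below uses the population standard deviation from Python's statistics module. The function name consistency_report and the 0.05 stability threshold are illustrative assumptions, not OpenMark AI's actual method.

```python
import statistics


def consistency_report(scores_by_model, max_stdev=0.05):
    """Summarize run-to-run variation of quality scores for each model.

    scores_by_model maps a model name to the scores from repeated runs of
    the same task. The max_stdev threshold is an illustrative assumption.
    """
    report = {}
    for model, scores in scores_by_model.items():
        if len(scores) < 2:
            raise ValueError(f"{model}: need at least two runs to measure consistency")
        stdev = statistics.pstdev(scores)
        report[model] = {
            "mean": statistics.mean(scores),
            "stdev": stdev,
            # A model counts as stable when its scores barely move between runs.
            "stable": stdev <= max_stdev,
        }
    return report
```

Looking at the mean and the spread together avoids picking a model whose single best run looked good but whose typical run does not.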

Use Cases

Agenta

Collaborative Development for LLM Applications

Agenta is ideal for AI teams that need to collaborate on LLM applications. Because tools and resources are centralized, teams can work together in real time, iterating on prompts and sharing insights to build more reliable LLM applications.

Streamlined Debugging Processes

When issues arise in production, Agenta's observability tools allow teams to quickly identify and resolve failures. By tracing requests and analyzing failures systematically, teams can enhance the reliability of their LLM applications.

Evidence-Based Performance Evaluation

With Agenta, teams can conduct thorough evaluations of their models and prompts using automated and human evaluation methods. This evidence-based approach ensures that changes lead to measurable improvements in performance.

Integration with Existing Workflows

Agenta integrates with popular frameworks and model providers, such as LangChain and OpenAI, so organizations can keep their current technology stack while improving their LLM development process. This minimizes disruption to existing workflows.

OpenMark AI

Model Selection for Product Development

Development teams can use OpenMark AI to assess which AI models best fit their specific application needs. By running targeted benchmarks, they can select the most suitable model that aligns with their project goals and user expectations.

Cost-Benefit Analysis for AI Implementations

Businesses looking to integrate AI can leverage OpenMark AI to analyze the true costs associated with different models. By comparing the quality of generated outputs against their costs, organizations can make strategic decisions that optimize their return on investment.

Quality Assurance Testing

Quality assurance teams can utilize OpenMark AI to ensure that their chosen AI models deliver consistent and high-quality outputs. This is vital for maintaining standards in applications where accuracy and reliability are paramount, such as customer service or content generation.

Pre-Deployment Validation

Before launching new AI features, teams can conduct extensive benchmarking using OpenMark AI to validate their choices. This ensures that the models selected will perform as anticipated under real-world conditions, allowing for a smoother deployment process.

Overview

About Agenta

Agenta is an open-source LLMOps platform that helps AI teams build and deploy reliable large language model (LLM) applications. It is designed for developers, product managers, and domain experts who need a shared, collaborative environment for their work. Because LLM behavior is unpredictable, teams often end up with scattered prompts, siloed workflows, and chaotic iteration. Agenta addresses this by centralizing development in a single source of truth, with collaboration, version control, and evidence-based evaluation built in. Its integrated observability features turn debugging into systematic analysis, letting teams monitor performance and make informed decisions from experimentation through deployment.

About OpenMark AI

OpenMark AI is a web application for task-level benchmarking of large language models (LLMs). Developers and product teams describe what they want to test in plain language, then compare many models side by side on cost per request, latency, scored quality, and output stability across repeated runs. Measuring stability across runs shows how much a model's output varies, instead of relying on a single, possibly misleading output. OpenMark AI does not require separate API keys from providers such as OpenAI, Anthropic, or Google, which lets teams focus on pre-deployment decisions. Because quality is evaluated relative to cost, it suits teams that prioritize cost efficiency. With a broad model catalog and flexible pricing plans, OpenMark AI is aimed at teams that want to choose the right model before launch.

Frequently Asked Questions

Agenta FAQ

What is LLMOps?

LLMOps refers to the operational practices and tools used to manage the lifecycle of large language models. It encompasses activities such as development, deployment, monitoring, and debugging to ensure reliable and efficient LLM applications.

Can Agenta integrate with other AI frameworks?

Yes, Agenta is designed for compatibility and can integrate with various frameworks and models, including LangChain and OpenAI. This ensures that teams can use their preferred tools while benefiting from Agenta's capabilities.

Is Agenta suitable for teams of all sizes?

Absolutely. Agenta is built to accommodate teams of any size, from small startups to large enterprises. Its collaborative features and centralized management make it ideal for diverse team structures and workflows.

How does Agenta support version control?

Agenta maintains a complete version history of prompts and evaluations, allowing teams to track changes over time. This feature ensures that team members can revert to previous versions if necessary, enhancing collaboration and reducing the risk of errors.

OpenMark AI FAQ

What types of benchmarks can I run with OpenMark AI?

OpenMark AI allows you to benchmark a wide range of tasks, including classification, translation, data extraction, research, and more. You can customize your benchmarks based on specific requirements.

Do I need to set up API keys to use OpenMark AI?

No, OpenMark AI eliminates the need for setting up separate API keys for different models. The application hosts the benchmarking environment, streamlining the process for users.

How does OpenMark AI ensure accurate benchmarking?

OpenMark AI ensures accuracy by conducting real API calls to various models during testing. This provides genuine insights into performance, cost, and quality, rather than relying on promotional figures.

Are there any costs associated with using OpenMark AI?

OpenMark AI offers both free and paid plans, allowing users to choose based on their benchmarking needs. You can access detailed pricing information within the application’s billing section.

Alternatives

Agenta Alternatives

Agenta is an open-source platform for large language model operations (LLMOps), aimed at AI teams that want to build and deploy reliable applications efficiently. By centralizing the development process, it promotes collaboration and streamlines experimentation, which matters for teams working with complex AI systems. Users often look for alternatives because of pricing, feature sets, or platform requirements that better match their operations. When evaluating an alternative, consider ease of use, integration capabilities, support services, and how well it fits your team’s AI development process.

OpenMark AI Alternatives

OpenMark AI is a web application for benchmarking large language models (LLMs) on specific tasks. Users describe their testing criteria in plain language and compare over 100 models side by side on cost, speed, quality, and stability. It is aimed at developers and product teams who want to validate models before deploying AI features. Users often look for alternatives because of pricing, feature sets, or specific platform requirements. When comparing alternatives, evaluate the breadth of model support, ease of use, and whether the tool produces reliable, consistent results across multiple test runs. These criteria help you find the most suitable model while keeping cost efficiency and quality in focus.